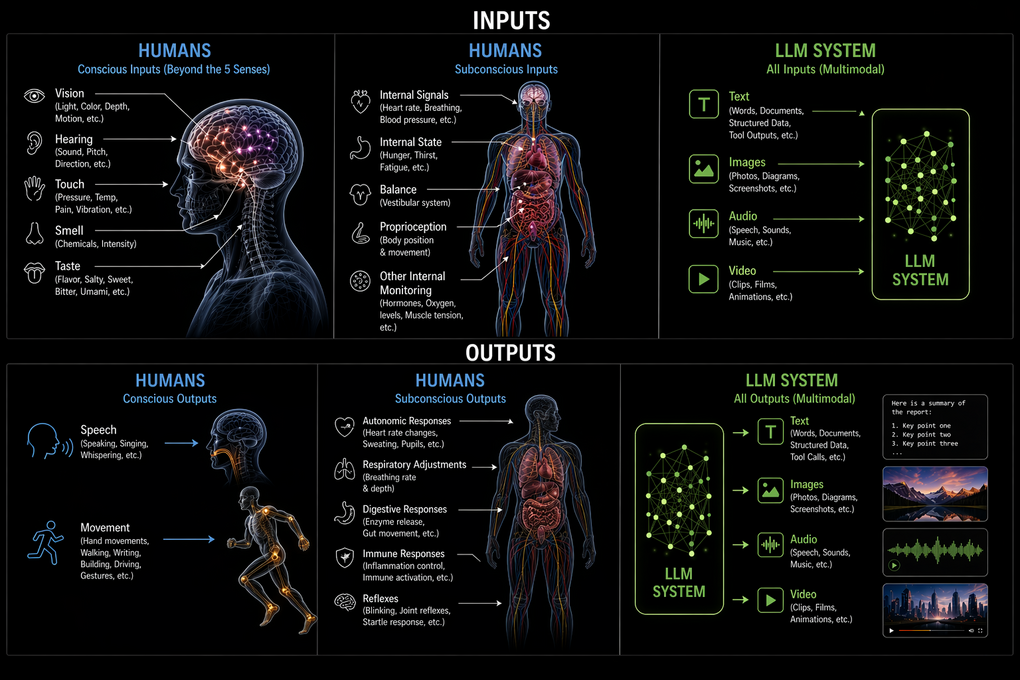

Before we start talking about AGI, let’s figure out what we really mean by intelligence and the mechanics of intelligence. I’ll start simple – both humans and LLMs act on inputs and produce outputs. We all agree computers take input and give output. Humans do the same. Let’s compare what that actually looks like.

Human Inputs

We usually say humans have five senses: sight, hearing, touch, taste, and smell. That’s a gross oversimplification. Even *touch* isn’t just one thing – it’s pressure, temperature, pain, and vibration.

And that’s just the easy stuff. Humans also get a constant stream of internal data – let’s call it system diagnostics: hunger, thirst, fatigue, heart rate, breathing state, and hormonal signals.

These signals shape your output, whatever it needs to be, without you consciously thinking about them. Then there are the subconscious senses most people never consider: balance, proprioception, and internal state monitoring (like muscle tension or oxygen levels).

We are, in practice, high-bandwidth, multi-modal biological input systems.

LLM Inputs

Modern LLMs can take in a mix of text, images, and audio. The text itself can be unstructured prose, structured data, or responses from tools.

Human Outputs

Human outputs fall into two big buckets: conscious and subconscious. Conscious outputs are speech and movement. Everything you create – writing, music, art, building something, typing – is *ultimately just movement and/or voice.* When you type a sentence, you’re orchestrating muscles. Technically, voice is also movement, but for practical purposes let’s just say our outputs are movement and sound.

Subconscious outputs: humans also produce outputs we hardly notice, like heart rate adjustments, breathing changes, hormonal regulation, and reflexes. These are outputs just as much as speech or motion. They keep the system alive and stable, even if you never think about them.

LLM Outputs

Depending on the model, an LLM can produce text, images, audio, or video. The text can include structured data or calls to tools that trigger actions in software.
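To make "calls to tools" concrete, here is a minimal sketch of how software around a model might treat its text output. Everything in it is an assumption for illustration: the JSON shape (`tool` and `arguments` keys), the `recharge_battery` tool, and the `dispatch` helper are all hypothetical, and real LLM APIs each define their own tool-call format.

```python
import json

def recharge_battery(target_percent: int) -> str:
    """Hypothetical tool: pretend to start charging the battery."""
    return f"charging to {target_percent}%"

# Registry of tools the surrounding software is willing to run (assumed).
TOOLS = {"recharge_battery": recharge_battery}

def dispatch(model_output: str) -> str:
    """Run a tool if the output is a recognized tool call, else return the text as-is."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return model_output  # ordinary unstructured text
    if not isinstance(call, dict):
        return model_output  # valid JSON, but not a tool call
    name = call.get("tool")
    if not isinstance(name, str) or name not in TOOLS:
        return model_output  # unknown tool: treat as plain text
    return TOOLS[name](**call.get("arguments", {}))

if __name__ == "__main__":
    print(dispatch("Hello there"))
    print(dispatch('{"tool": "recharge_battery", "arguments": {"target_percent": 80}}'))
```

The model only ever emits text. It becomes an action because some other piece of software reads that text and decides to act on it.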

Same Structure, Different Mediums

Looked at plainly, humans and LLMs both take in inputs and produce outputs. The channels differ. The hardware differs. The formats differ. There is one more difference: humans take in inputs and produce outputs continuously. LLMs don’t do that by default, although you could technically set them up to consume a continuous input stream and keep producing outputs.

Framing humans and AI in terms of inputs and outputs pushes the conversation about intelligence into practical territory. If we’re all just processing inputs to produce outputs, what is it that makes us intelligent and LLMs not? Stay tuned for the next part of this series.

While you wait for part 2, think about this. Let’s say we have a robot with an LLM that continuously receives its battery level as an input. When the level falls below 20%, it recharges the battery. How different is that from you eating when you get the hunger signal?
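If you want something to poke at while you think, here is a minimal sketch of that robot loop. It rests on a few assumptions: a hard-coded rule stands in for the LLM, the battery readings are a made-up list instead of a live sensor, and the names (`decide`, `run`, `RECHARGE_THRESHOLD`) are hypothetical.

```python
from typing import Iterable, Iterator, Tuple

RECHARGE_THRESHOLD = 20.0  # percent, taken from the thought experiment

def decide(battery_percent: float) -> str:
    """Map one internal-state reading to an action (a stand-in for the LLM)."""
    return "recharge" if battery_percent < RECHARGE_THRESHOLD else "continue"

def run(readings: Iterable[float]) -> Iterator[Tuple[float, str]]:
    """Consume a stream of battery readings and yield an action for each one."""
    for level in readings:
        yield level, decide(level)

if __name__ == "__main__":
    # Simulated sensor stream (assumed values); a real robot would poll hardware.
    for level, action in run([80.0, 45.0, 20.0, 19.5, 5.0]):
        print(f"battery={level:5.1f}% -> {action}")
```

Swap `battery_percent` for a hunger signal and `recharge` for `eat`, and the shape of the loop doesn’t change.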